Picture this: a factory floor manager in Ulsan, South Korea, staring at a wall of legacy Mitsubishi PLCs from the late 2000s. The machines are running fine, with well over a decade of reliable operation behind them, but they’re essentially deaf and blind to the rest of the modern digital ecosystem. No data streaming, no remote diagnostics, no predictive alerts. Sound familiar? This exact scenario is playing out in thousands of factories right now, and the race to bridge that gap through IIoT (Industrial Internet of Things) integration is one of the defining manufacturing stories of 2026.

Today, let’s think through this together — what PLC-to-IIoT integration actually looks like in practice, which real companies pulled it off successfully, and what realistic paths exist if your factory isn’t sitting on a greenfield budget.

Why PLC-to-IIoT Integration Is Harder Than It Sounds

PLCs (Programmable Logic Controllers) are the heartbeat of any automated factory. Product families like Siemens SIMATIC S7, Allen-Bradley (Rockwell), Mitsubishi MELSEC, and Omron have dominated shop floors for decades. The challenge? They were designed for closed-loop control, not open data sharing. Most legacy PLCs communicate over fieldbus and industrial Ethernet protocols such as Modbus RTU, PROFIBUS, and EtherNet/IP. Several of these are published standards rather than proprietary, but none of them natively speaks to cloud platforms or modern analytics stacks.

A 2025 survey by ARC Advisory Group found that over 68% of manufacturing facilities globally still operate PLCs more than 10 years old, with no built-in IIoT capability. The cost of full hardware replacement is prohibitive — we’re often talking $500,000 to several million dollars for a mid-sized line. So the industry pivoted to something smarter: edge gateway bridging.

The Technical Bridge: How Edge Gateways Make It Work

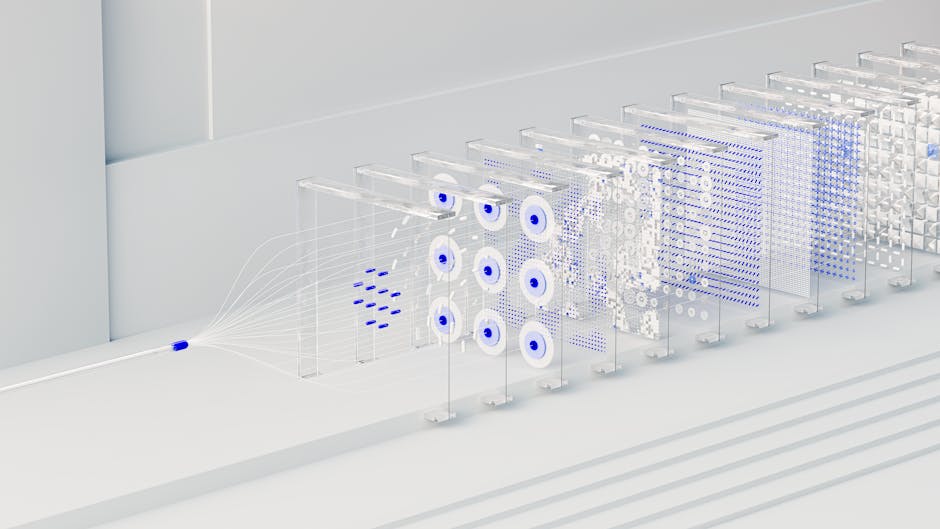

The dominant architecture in 2026 for PLC-IIoT integration looks roughly like this:

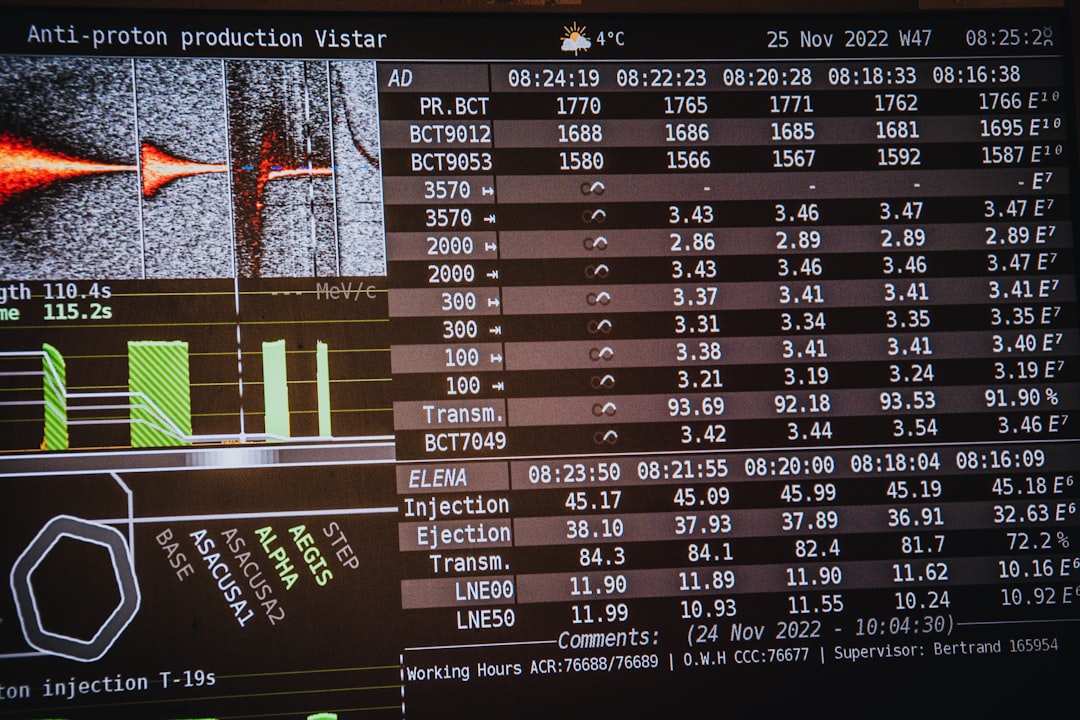

- PLC Layer: Existing PLCs continue controlling machines using their native protocols (Modbus, PROFINET, OPC-UA, EtherNet/IP).

- Edge Gateway Layer: Industrial edge devices, like Siemens SIMATIC IPC, Advantech ADAM series, or Moxa gateways, sit between the PLC and the cloud. They translate fieldbus and vendor-specific protocols into IT-friendly formats (typically OPC-UA or MQTT over TLS).

- Connectivity Layer: Data travels via 5G private networks, Wi-Fi 6E, or wired Gigabit Ethernet to local edge servers or directly to cloud platforms.

- Cloud/Platform Layer: Platforms like AWS IoT Greengrass, Microsoft Azure IoT Hub, PTC ThingWorx, or Korea’s own Metatron ingest, store, and analyze the data streams.

- Application Layer: Dashboards, predictive maintenance alerts, OEE (Overall Equipment Effectiveness) tracking, and digital twin simulations become live and actionable.
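
To make the gateway layer's translation step concrete, here is a minimal Python sketch of what happens to one reading on its way from PLC registers to an MQTT message. The topic layout (`factory/<line>/<machine>/<tag>`), the JSON field names, and the big-endian word order are all assumptions for illustration; real gateways and devices differ, and the actual Modbus read and MQTT publish calls are left out.

```python
import json
import struct
from datetime import datetime, timezone


def registers_to_float(high_word: int, low_word: int) -> float:
    """Combine two 16-bit holding registers into one IEEE-754 float32.

    Assumes big-endian word order (high word first). Many devices swap
    the words, so check the device manual before relying on this.
    """
    raw = struct.pack(">HH", high_word, low_word)
    return struct.unpack(">f", raw)[0]


def build_message(line: str, machine: str, tag: str, value: float, ts: datetime):
    """Return the (topic, payload) pair a gateway might publish over MQTT."""
    # Hypothetical topic hierarchy; pick one convention and keep it stable.
    topic = f"factory/{line}/{machine}/{tag}"
    payload = json.dumps(
        {"value": round(value, 3), "ts": ts.isoformat(), "quality": "good"},
        sort_keys=True,
    )
    return topic, payload


if __name__ == "__main__":
    # 0x42C8 0x0000 is the float32 bit pattern for 100.0.
    temperature = registers_to_float(0x42C8, 0x0000)
    now = datetime.now(timezone.utc)
    print(build_message("line1", "press04", "temperature_c", temperature, now))
```

Keeping the decode step and the message-building step separate makes it easier to swap the PLC protocol later without touching anything downstream of the broker.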

The secret weapon in 2026? OPC-UA (Open Platform Communications Unified Architecture) has finally achieved critical mass adoption. It’s become the lingua franca that lets a 2008 Siemens S7-300 PLC feed a brand-new AWS cloud analytics pipeline without ripping anything out. The S7-300 has no OPC-UA server of its own, so a gateway or OPC-UA server in front of it does the translation.

Real-World Case Study 1: Hyundai Mobis, South Korea

Hyundai Mobis’ Asan plant undertook a phased PLC-IIoT integration project between 2023 and early 2026. Rather than replacing their existing Siemens S7 and Fanuc CNC controllers, they deployed Siemens MindSphere edge connectors paired with a private 5G network built in collaboration with SKT (SK Telecom).

The results after full deployment were striking: machine downtime dropped by 23% year-over-year, and predictive maintenance alerts, triggered by vibration and temperature anomalies streamed from PLC sensor data, prevented an estimated 14 major line stoppages in 2025 alone. The total integration cost was approximately ₩4.2 billion (~$3.1M USD), compared to an estimated ₩18 billion for full hardware replacement. The investment paid for itself within 26 months.
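
The cost figures above are easier to compare once the arithmetic is written out. The short Python sketch below uses only the numbers quoted in the case study. The "implied monthly benefit" is an assumption on my part: it treats the 26-month figure as a simple payback period with a flat monthly benefit, which the source does not state.

```python
# Figures quoted in the case study, in KRW.
INTEGRATION_COST = 4_200_000_000
REPLACEMENT_COST = 18_000_000_000
PAYBACK_MONTHS = 26

# How much cheaper the retrofit was than full hardware replacement.
savings_vs_replacement = REPLACEMENT_COST - INTEGRATION_COST
cost_ratio = INTEGRATION_COST / REPLACEMENT_COST

# Assumption: simple payback with a constant monthly benefit.
implied_monthly_benefit = INTEGRATION_COST / PAYBACK_MONTHS

print(f"Avoided spend: {savings_vs_replacement / 1e9:.1f} billion KRW")
print(f"Retrofit cost as share of replacement: {cost_ratio:.1%}")
print(f"Implied monthly benefit: {implied_monthly_benefit / 1e6:.1f} million KRW")
```

In other words, the retrofit cost a little under a quarter of the replacement estimate.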

Real-World Case Study 2: Bosch Rexroth, Germany

Bosch Rexroth’s Lohr am Main facility (hydraulics manufacturing) offers a compelling European example. They faced a patchwork of Allen-Bradley PLCs, legacy Rexroth controllers, and KUKA robotic cells — none of which communicated with each other, let alone the cloud.

Their solution, rolled out through 2025-2026, centered on ctrlX AUTOMATION (their own platform) combined with Kepware’s KEPServerEX as an OPC-UA aggregator. Every PLC’s data now feeds into a unified Bosch IoT Suite dashboard. The standout outcome: they achieved real-time OEE visibility across 47 machines simultaneously, and energy consumption analytics helped reduce per-unit energy cost by 11% — a huge win given Europe’s ongoing energy pricing pressures.
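
For readers new to the metric, OEE is conventionally the product of availability, performance, and quality. The Python sketch below shows that standard calculation for a single machine and shift. The function name, parameter names, and sample numbers are illustrative assumptions, not figures from Bosch Rexroth.

```python
def oee(planned_min: float, downtime_min: float, ideal_cycle_s: float,
        total_count: int, good_count: int) -> dict:
    """Compute OEE and its three factors for one machine over one period."""
    run_time_min = planned_min - downtime_min
    if planned_min <= 0 or run_time_min <= 0 or total_count <= 0:
        raise ValueError("need positive planned time, run time, and part count")

    # Share of planned time the machine was actually running.
    availability = run_time_min / planned_min
    # Actual output versus what the ideal cycle time would allow.
    performance = (ideal_cycle_s * total_count) / (run_time_min * 60)
    # Share of produced parts that passed inspection.
    quality = good_count / total_count

    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


if __name__ == "__main__":
    # Hypothetical 8-hour shift: 48 min of stops, 30 s ideal cycle,
    # 720 parts produced, 702 of them good.
    print(oee(planned_min=480, downtime_min=48, ideal_cycle_s=30,
              total_count=720, good_count=702))
```

Each of the inputs maps to something a PLC already tracks (run state, cycle counter, reject counter), which is why OEE tends to be one of the first dashboards built after integration.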

Case Study 3: A Mid-Sized Korean SME — The Realistic Version

Not every story is a Hyundai or Bosch. Let’s talk about Youngbo Tech, a fictional-but-representative SME in Changwon with ~200 employees making precision machined parts. Budget: tight. IT staff: two people. PLCs: Mitsubishi Q-series from 2011.

Their approach in 2025 was refreshingly pragmatic. They used open-source Node-RED running on a Raspberry Pi 4-based edge device (cost: under $200) to poll Mitsubishi PLCs via MC Protocol, convert data to MQTT, and push it to an InfluxDB + Grafana stack hosted on a local NAS server. No cloud subscription fees. No enterprise contracts.

Was it as powerful as Bosch’s setup? No. But they got real-time temperature, cycle count, and alarm monitoring up and running within 6 weeks for under ₩15 million (~$11,000). For an SME, that’s transformational. It’s a great reminder that IIoT doesn’t have to be all-or-nothing.
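
The last hop in a stack like this, from a decoded reading to a row in InfluxDB, comes down to formatting InfluxDB line protocol. In a Node-RED setup the InfluxDB nodes handle this, so the Python sketch below is only meant to show what the format looks like. The measurement, tag, and field names are hypothetical, and the escaping covers only the common cases.

```python
def _escape_key(text: str) -> str:
    """Escape commas, equals signs, and spaces in tag keys/values and field keys."""
    return text.replace(",", r"\,").replace("=", r"\=").replace(" ", r"\ ")


def _format_field(value) -> str:
    """Render one field value with the type marker line protocol expects."""
    if isinstance(value, bool):  # test bool first: bool is a subclass of int
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"  # integers carry a trailing "i"
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line_protocol(measurement: str, tags: dict, fields: dict, ts_ns: int) -> str:
    """Build one line: measurement,tag=... field=... timestamp (nanoseconds)."""
    if not fields:
        raise ValueError("line protocol requires at least one field")
    name = measurement.replace(",", r"\,").replace(" ", r"\ ")
    # Tags are sorted by key, which InfluxDB recommends for write performance.
    tag_part = "".join(
        f",{_escape_key(k)}={_escape_key(str(v))}" for k, v in sorted(tags.items())
    )
    field_part = ",".join(
        f"{_escape_key(k)}={_format_field(v)}" for k, v in fields.items()
    )
    return f"{name}{tag_part} {field_part} {ts_ns}"


if __name__ == "__main__":
    print(to_line_protocol(
        "press",
        {"machine": "q03", "line": "a"},
        {"temp_c": 61.5, "cycles": 1204, "alarm": False},
        1_700_000_000_000_000_000,
    ))
```

Putting slow-changing identifiers (line, machine) in tags and the measured values in fields keeps Grafana queries simple, since tags are indexed and fields are not.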

Realistic Alternatives Based on Your Situation

Here’s where I want to be genuinely helpful rather than just inspiring. Your ideal path depends heavily on budget, PLC age, and internal expertise:

- Budget under $20,000 (SME): Open-source stack — Node-RED + MQTT + InfluxDB + Grafana. Pairs well with Moxa or Advantech sub-$500 gateways. Limited scalability but excellent for proof-of-concept and small lines.

- Budget $50K–$500K (Mid-market): Look at Ignition SCADA by Inductive Automation, whose licensing model doesn’t charge per tag, making it surprisingly affordable at scale. Pairs with OPC-UA for broad PLC compatibility.

- Budget $500K+ (Enterprise): Full platform plays such as Siemens MindSphere (since rebranded as Insights Hub), PTC ThingWorx, or Azure IoT Hub with custom connectors. Invest heavily in cybersecurity (IEC 62443 compliance) at this tier; it is non-negotiable in 2026 given rising OT cyberattacks.

- Legacy PLCs with no Ethernet port: Serial-to-Ethernet converters (like Moxa NPort series) can unlock even ancient RS-232/RS-485 Modbus devices. Don’t write off old iron just yet.

- No internal IT expertise: Consider MES-as-a-Service providers like Sight Machine or Korea’s MiCo (MiCo BioMed’s industrial division) who handle integration end-to-end under a managed service model.
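
For the serial-only PLCs mentioned in the list above, it helps to see how small a Modbus RTU request actually is. The Python sketch below builds a "read holding registers" (function code 0x03) frame and appends the standard CRC-16/MODBUS checksum. The function names are my own, and sending the bytes through a serial-to-Ethernet converter is left out.

```python
import struct


def crc16_modbus(data: bytes) -> int:
    """CRC-16/MODBUS: initial value 0xFFFF, reflected polynomial 0xA001."""
    crc = 0xFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xA001
            else:
                crc >>= 1
    return crc


def read_holding_registers_request(unit_id: int, start_address: int,
                                   quantity: int) -> bytes:
    """Build a Modbus RTU request frame for function code 0x03."""
    if not 1 <= quantity <= 125:
        raise ValueError("Modbus allows 1 to 125 registers per read")
    # Address and quantity are big-endian on the wire.
    body = struct.pack(">BBHH", unit_id, 0x03, start_address, quantity)
    # The CRC is appended low byte first.
    return body + struct.pack("<H", crc16_modbus(body))


if __name__ == "__main__":
    frame = read_holding_registers_request(unit_id=1, start_address=0, quantity=10)
    print(frame.hex(" "))
```

A handy sanity check when debugging captured traffic: running `crc16_modbus` over a complete, valid frame (checksum included) yields zero.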

What to Watch Out For in 2026

A few honest cautions as you plan your integration:

- OT Cybersecurity is no longer optional. Connecting PLCs to any network — even internal — opens attack surfaces. The 2025 Düsseldorf automotive supplier ransomware attack (which propagated via an unsecured PLC gateway) cost €47M in production losses. Segment your networks. Seriously.

- Data overload is real. A single PLC can generate thousands of tags. Without a clear analytics strategy upfront, you’ll drown in data and gain no insight. Start with 5-10 KPIs that matter to your operation.

- Vendor lock-in. Some enterprise platforms make it painfully expensive to migrate later. Prioritize OPC-UA compatibility and open APIs.
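
One common way to act on the data-overload caution above is to combine a tag allowlist with a deadband, so the gateway only forwards a value when it has moved by a meaningful amount. The Python sketch below shows the idea; the class name, tag names, and thresholds are hypothetical.

```python
from typing import Dict


class DeadbandFilter:
    """Forward a reading only if its tag is allowlisted and it changed enough."""

    def __init__(self, deadbands: Dict[str, float]):
        # Keys double as the allowlist; values are the minimum change to report.
        self.deadbands = deadbands
        self._last_sent: Dict[str, float] = {}

    def should_publish(self, tag: str, value: float) -> bool:
        if tag not in self.deadbands:
            return False  # not one of the chosen KPIs, drop it
        last = self._last_sent.get(tag)
        if last is None or abs(value - last) >= self.deadbands[tag]:
            self._last_sent[tag] = value  # compare against last *sent* value
            return True
        return False


if __name__ == "__main__":
    flt = DeadbandFilter({"temp_c": 0.5, "cycle_count": 1})
    readings = [("temp_c", 60.0), ("temp_c", 60.2), ("temp_c", 60.6),
                ("spindle_debug", 3.0), ("cycle_count", 1204)]
    for tag, value in readings:
        print(tag, value, flt.should_publish(tag, value))
```

Comparing against the last sent value, rather than the last seen value, stops a slow drift from slipping through unreported.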

The bottom line? PLC-to-IIoT integration in 2026 is more accessible than ever — but it still requires clear thinking about your specific constraints, not just copying what the industry giants do. Start small, prove value, and scale deliberately.

Editor’s Comment: What excites me most about where we are in 2026 is that this is no longer exclusively a Fortune 500 game. The democratization of open-source IIoT tools, sub-$500 edge gateways, and cloud platforms with generous free tiers means a 50-person machine shop in Changwon or a family-run auto parts supplier in Ohio can genuinely start their smart factory journey this quarter. The technology is ready. The bigger question, and the more interesting one, is whether your organization’s processes and people are ready to act on the data once it starts flowing. That’s the conversation worth having next.
