Picture this: it’s a rainy Tuesday morning, and a logistics manager in São Paulo is watching her real-time fleet dashboard freeze for three agonizing seconds — just long enough to miss a rerouting window that costs the company thousands. The culprit? Every data request was making a round trip to a centralized cloud server thousands of miles away. Now fast-forward to today, 2026, and that same dashboard runs on an edge-native full-stack architecture, processing data milliseconds from the source. The difference is night and day.

Edge computing has graduated from a buzzword to a genuine architectural philosophy — and if you’re building full-stack applications in 2026, understanding how to design for the edge isn’t optional anymore. Let’s think through this together, because the shift is more nuanced than simply “move your server closer.”

What Exactly Is Edge-Based Full-Stack Architecture?

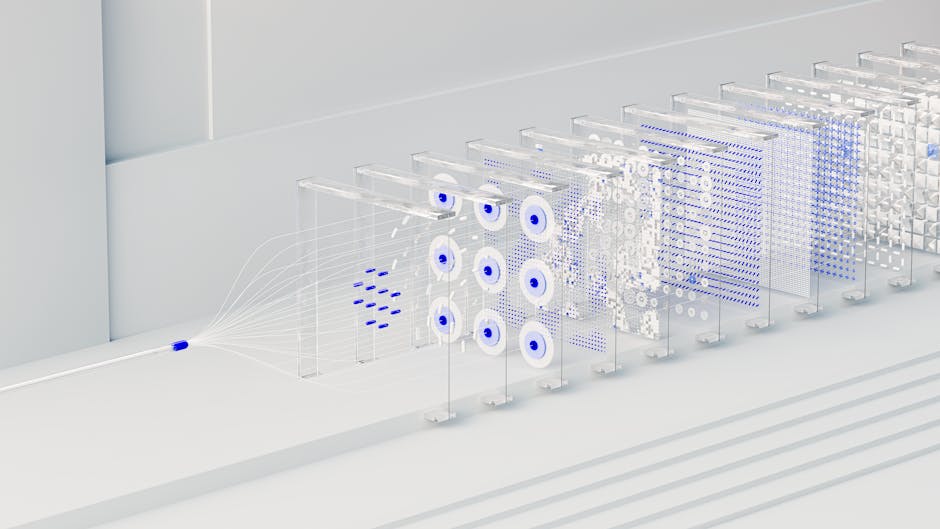

Traditional full-stack development meant a clear separation: a frontend (React, Vue, etc.), a backend API layer, and a database, all typically hosted in one or two centralized cloud regions. Edge computing flips part of this model by distributing compute workloads to nodes geographically closer to end users. In 2026, platforms like Cloudflare Workers, Vercel Edge Functions, AWS Lambda@Edge, and Fastly Compute have matured to the point where full-stack logic, not just static assets, can run at these distributed nodes.
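To make "full-stack logic at the edge" concrete, here is a minimal sketch of a request handler in the module style Cloudflare Workers uses: a default-exported object with a `fetch(request)` method. It relies only on the Web-standard `Request`, `Response`, and `URL` APIs that isolate runtimes expose (Node 18+ has them too). The `/api/greet` route is a hypothetical example, not a platform convention.

```typescript
// Minimal edge-style request handler (module-worker shape: an object with fetch()).
// Uses only Web-standard Request/Response/URL, which isolate runtimes provide.
// The /api/greet route is hypothetical and exists only for illustration.
const worker = {
  async fetch(request: Request): Promise<Response> {
    const url = new URL(request.url);

    if (request.method === "GET" && url.pathname === "/api/greet") {
      const name = url.searchParams.get("name") ?? "world";
      // The logic runs inside the isolate at the PoP; no origin round trip needed.
      return new Response(JSON.stringify({ message: `Hello, ${name}!` }), {
        headers: { "content-type": "application/json" },
      });
    }

    return new Response("Not found", { status: 404 });
  },
};

export default worker;
```

Other platforms wrap the same idea in their own entry-point conventions, so treat the export shape as Workers-specific.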

What makes 2026 especially interesting is the rise of edge-compatible databases. Turso (built on libSQL) and Cloudflare D1 can serve reads from replicas placed close to users, and PlanetScale’s edge proxy layer shortens the path from edge functions to the database. This means your entire stack, compute and data access alike, can be geographically distributed without you managing a single physical server.
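The practical consequence of replicas at the edge is that reads and writes take different paths. The sketch below shows one common routing policy under stated assumptions: a single primary accepts all writes, read replicas may lag slightly behind it, and each endpoint's latency from the current edge node is already known. The `Topology` shape, region names, and the `read-fresh` query kind are hypothetical, not any provider's API.

```typescript
// Read/write splitting for an edge app backed by one primary database plus
// geographically distributed read replicas. All names here are hypothetical.
interface Endpoint {
  region: string;
  url: string;
  latencyMs: number; // measured or estimated RTT from the current edge node
}

interface Topology {
  primary: Endpoint;
  replicas: Endpoint[];
}

// "read-fresh" marks reads that must observe the caller's own latest write.
type QueryKind = "read" | "read-fresh" | "write";

function pickEndpoint(topology: Topology, kind: QueryKind): Endpoint {
  // Writes, and reads that need read-your-writes consistency, go to the primary.
  if (kind !== "read" || topology.replicas.length === 0) {
    return topology.primary;
  }
  // Ordinary reads go to the lowest-latency replica, accepting slight staleness.
  return topology.replicas.reduce((best, r) => (r.latencyMs < best.latencyMs ? r : best));
}
```

Managed services may offer built-in consistency options (for example, session-based read-your-writes), so check your provider's documentation before hand-rolling a policy like `read-fresh`.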

The Numbers That Make This Real

Let’s get concrete. According to Gartner’s infrastructure report released in early 2026, over 55% of enterprise data is now expected to be processed outside of traditional centralized data centers — up from just 10% in 2018. Meanwhile, the average latency reduction achieved by moving API logic to edge nodes ranges from 40ms to 200ms depending on geography and workload type. For consumer-facing apps, Google’s Core Web Vitals research consistently shows that a 100ms improvement in Time to First Byte (TTFB) can improve conversion rates by 1–3% — which sounds small until you’re running an e-commerce platform at scale.
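Part of that 40–200 ms range is simple physics. As a back-of-the-envelope sketch, assuming signals travel through optical fiber at roughly 200,000 km/s (about two-thirds the speed of light in a vacuum) and ignoring routing detours, queuing, and handshakes, the minimum round-trip time grows linearly with distance. The two distances below are illustrative, not measurements.

```typescript
// Lower bound on network round-trip time from propagation delay alone.
// Assumption: ~200 km per millisecond in fiber (about 2/3 of c). Real routes are
// longer than straight-line distance and add queuing/processing delay on top.
const FIBER_KM_PER_MS = 200;

function minRoundTripMs(oneWayKm: number): number {
  return (2 * oneWayKm) / FIBER_KM_PER_MS;
}

// Hypothetical distances: a nearby PoP vs. a distant centralized region.
const nearbyPop = minRoundTripMs(100); // 1 ms
const distantRegion = minRoundTripMs(7500); // 75 ms
console.log(`saved per round trip: ~${distantRegion - nearbyPop} ms`); // saved per round trip: ~74 ms
```

Real-world savings vary because actual routes are longer than straight-line distance and a single user action may need more than one sequential round trip, which is why the cited range is wide.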

The IDC Global Edge Computing Forecast (2026 edition) pegs the edge computing market at $232 billion USD, growing at a CAGR of 19.4%. This isn’t speculative infrastructure investment — it’s being driven by very real demands from IoT, autonomous vehicles, AR/VR applications, and AI inference at the edge.

Real-World Examples: From Seoul to Stockholm

Let’s look at how edge-native full-stack thinking is playing out in practice around the world.

South Korea — Kakao’s Micro-Frontend Edge Deployment: Kakao, one of South Korea’s largest tech conglomerates, began rolling out edge-deployed micro-frontend modules in late 2025. By serving personalized UI components from Cloudflare’s PoPs (South Korea has several dense ones), they reduced perceived load time for KakaoTalk Web by approximately 170ms on average for users outside Seoul. Their backend logic for notification processing now runs as Durable Objects — stateful edge workers — minimizing trips to their central database clusters.

Sweden — Klarna’s Edge-First Fraud Detection: Klarna, the buy-now-pay-later giant, has been aggressively pushing ML inference to the edge. In 2026, their fraud detection pipeline uses lightweight ONNX models deployed to edge nodes that make preliminary risk assessments before a transaction request even reaches their core backend. This reduced their average fraud-check latency from ~320ms to under 40ms, dramatically improving checkout completion rates in markets like Germany and the Netherlands.

United States — Shopify’s Hydrogen v3 on the Edge: Shopify’s Hydrogen framework (their React-based storefront solution) hit version 3 in 2026 with full edge-first support baked in. Merchants running custom storefronts now benefit from server-side rendering happening at Oxygen (Shopify’s edge hosting) nodes closest to each shopper — not in a single US-East data center. Early adopters reported TTFB improvements of 60–80% for international customers.

The Stack That Works in 2026’s Edge World

So what does a pragmatic edge-native full-stack look like right now? Here’s a setup that’s gaining serious traction among teams building production apps:

- Frontend Framework: Next.js 15 or Remix v3 — both have excellent edge runtime support with React Server Components rendering at the edge.

- Edge Runtime: Cloudflare Workers or Vercel Edge Functions — V8-isolate-based, cold-start times under 5ms, and globally distributed out of the box.

- Database Layer: Turso (edge SQLite with replication) or Cloudflare D1 for read-heavy workloads; Neon (serverless Postgres) with connection pooling via PgBouncer for write-heavy scenarios.

- Authentication: Auth.js (formerly NextAuth) with JWT-based sessions optimized for stateless edge environments — avoid session-store-heavy solutions that require central DB lookups on every request.

- State & Caching: Cloudflare KV for key-value caching, Durable Objects for stateful coordination (think collaborative editing, rate limiting).

- AI Inference at the Edge: Cloudflare Workers AI or Vercel’s AI SDK with edge-compatible model routing — great for lightweight tasks like content classification, personalization, and sentiment tagging without round-tripping to OpenAI every time.

- Observability: Axiom or Baselime for edge-compatible logging and tracing. Traditional tools like Datadog now offer edge adapters, but purpose-built solutions handle the distributed nature better.
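The caching layer in the list above usually follows a cache-aside pattern: check the key-value store, fall back to the origin on a miss, and write the result back with a TTL. This sketch assumes a minimal `KvLike` interface modeled on the `get`/`put` subset of Workers KV (where `expirationTtl` is in seconds); `MemoryKv` is a hypothetical in-memory stand-in so the code runs outside a Worker.

```typescript
// Cache-aside helper for an edge function. KvLike mirrors the small subset of
// Workers KV used here (get, and put with expirationTtl in seconds); MemoryKv is
// a hypothetical local stand-in, not the real KV binding.
interface KvLike {
  get(key: string): Promise<string | null>;
  put(key: string, value: string, options?: { expirationTtl?: number }): Promise<void>;
}

class MemoryKv implements KvLike {
  private readonly store = new Map<string, { value: string; expiresAt: number }>();
  private readonly now: () => number;

  constructor(now: () => number = () => Date.now()) {
    this.now = now; // injectable clock (milliseconds) for deterministic tests
  }

  async get(key: string): Promise<string | null> {
    const entry = this.store.get(key);
    if (!entry || entry.expiresAt <= this.now()) return null; // missing or expired
    return entry.value;
  }

  async put(key: string, value: string, options?: { expirationTtl?: number }): Promise<void> {
    const ttlMs = (options?.expirationTtl ?? Infinity) * 1000; // no TTL means never expire
    this.store.set(key, { value, expiresAt: this.now() + ttlMs });
  }
}

// Return the cached value if present; otherwise load from the origin and cache it.
async function cacheAside(
  kv: KvLike,
  key: string,
  ttlSeconds: number,
  loadFromOrigin: () => Promise<string>,
): Promise<string> {
  const cached = await kv.get(key);
  if (cached !== null) return cached;
  const fresh = await loadFromOrigin();
  await kv.put(key, fresh, { expirationTtl: ttlSeconds });
  return fresh;
}
```

Workers KV is eventually consistent across locations, so this pattern suits data that tolerates brief staleness, such as product listings or feature flags; for strictly coordinated state, the list points to Durable Objects instead.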

The Real Challenges Nobody Talks About Enough

Here’s where I want to think through the realistic picture with you, because it’s not all smooth sailing.

Cold starts are mostly solved, but state management isn’t. V8 isolates largely eliminated the notorious cold-start problem that plagued container-based functions such as AWS Lambda. But managing stateful logic at the edge, such as WebSockets, session consistency across nodes, or transactional writes, remains genuinely tricky. Cloudflare’s Durable Objects help, but they come with their own mental model to learn.
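Durable Objects address this by giving each piece of coordinated state a single owner to which all requests for a given key are routed; if every PoP kept its own copy of a rate limiter instead, a client spread across several PoPs could exceed the limit. The logic inside that owner can be ordinary code. Below is a token-bucket rate limiter of the kind you might keep inside such an object. It is plain TypeScript with an injected clock, not the Durable Objects API itself, and the capacity and refill numbers in the usage are arbitrary.

```typescript
// Token-bucket rate limiter: the kind of per-key state a single Durable Object
// could own. Plain TypeScript (no Durable Objects API); the clock is injected so
// behaviour is deterministic in tests.
class TokenBucket {
  private tokens: number;
  private lastRefill: number;
  private readonly capacity: number;
  private readonly refillPerSecond: number;
  private readonly now: () => number;

  constructor(capacity: number, refillPerSecond: number, now: () => number = () => Date.now()) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.now = now;
    this.tokens = capacity; // start full so an initial burst is allowed
    this.lastRefill = now();
  }

  // Returns true if the request is allowed, consuming one token.
  tryRemove(): boolean {
    const current = this.now();
    const elapsedSeconds = (current - this.lastRefill) / 1000;
    // Refill proportionally to elapsed time, never exceeding capacity.
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSeconds * this.refillPerSecond);
    this.lastRefill = current;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}
```

With a capacity of 3 and a refill rate of 1 token per second, a burst of four requests at the same instant lets three through; one second later, one more is allowed.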

Edge environments are constrained environments. Most edge runtimes don’t support the full Node.js API surface. No native modules, limited filesystem access, memory caps (typically 128MB per isolate). If your backend relies heavily on Node-specific packages, you’ll need to audit and likely replace several dependencies.
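A common case is Node's `Buffer`, which many packages use for Base64 encoding. A portable alternative, sketched below, uses only `TextEncoder`, `TextDecoder`, `btoa`, and `atob`, which browsers, Workers-style runtimes, and recent Node versions all provide. Some platforms also offer opt-in Node.js compatibility (Cloudflare's `nodejs_compat` compatibility flag, for example), which can reduce how much you need to rewrite.

```typescript
// Web-standard replacement for Buffer-based Base64 handling of UTF-8 text.
// btoa/atob work on "binary strings" (one char per byte), so we convert through
// bytes explicitly to handle non-ASCII characters correctly.
function utf8ToBase64(text: string): string {
  const bytes = new TextEncoder().encode(text);
  let binary = "";
  for (let i = 0; i < bytes.length; i++) {
    binary += String.fromCharCode(bytes[i]);
  }
  return btoa(binary);
}

function base64ToUtf8(encoded: string): string {
  const binary = atob(encoded);
  const bytes = new Uint8Array(binary.length);
  for (let i = 0; i < binary.length; i++) {
    bytes[i] = binary.charCodeAt(i);
  }
  return new TextDecoder().decode(bytes);
}

console.log(utf8ToBase64("hello")); // aGVsbG8=
console.log(base64ToUtf8(utf8ToBase64("São Paulo"))); // São Paulo
```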

Debugging distributed systems is harder. When something breaks in a centralized server, you look in one place. When your logic is spread across 200+ PoPs, distributed tracing becomes non-negotiable — and it adds real operational overhead.
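One widely supported way to stitch requests together across PoPs and your origin is the W3C Trace Context `traceparent` header (`version-traceid-parentid-flags`, lowercase hex). The sketch below parses an incoming header and derives the header for an outbound call, keeping the trace ID and substituting the current span's ID. In practice an OpenTelemetry SDK or your observability vendor's library would usually do this for you; the span ID is passed in here only to keep the example deterministic.

```typescript
// Minimal W3C Trace Context (traceparent) handling for an edge function.
// Format: 2-hex version - 32-hex trace-id - 16-hex parent-id - 2-hex flags.
interface TraceParent {
  version: string;
  traceId: string;
  parentId: string;
  flags: string;
}

const TRACEPARENT_RE = /^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function parseTraceparent(header: string | null): TraceParent | null {
  if (!header) return null;
  const m = TRACEPARENT_RE.exec(header.trim());
  if (!m) return null;
  const [, version, traceId, parentId, flags] = m;
  // Version ff is invalid, and all-zero trace or parent IDs are not allowed.
  if (version === "ff" || /^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  return { version, traceId, parentId, flags };
}

// Header for an outbound request: same trace, current span as the new parent.
function childTraceparent(parent: TraceParent, currentSpanId: string): string {
  return `00-${parent.traceId}-${currentSpanId}-${parent.flags}`;
}
```

If no valid header arrives, the edge function would start a new trace with freshly generated IDs, so every downstream log line still shares one trace ID.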

Realistic Alternatives Based on Your Situation

Not everyone needs a pure edge-first architecture, and that’s completely fine. Let’s match the approach to the reality:

- If you’re building an internal enterprise tool with fewer than 20,000 daily users: A traditional Next.js app on Vercel or Railway with a managed Postgres instance is probably the right call. The operational overhead of edge-first isn’t worth it at this scale.

- If you’re building a media-heavy consumer app with global reach: Hybrid approach — static assets + CDN, edge middleware for auth/personalization, centralized backend for heavy compute. This is the pragmatic sweet spot for most teams in 2026.

- If you’re building IoT or real-time applications: Full edge-native architecture makes strong sense here. Latency is existential to the product, and the investment in edge infrastructure pays dividends quickly.

- If you’re a solo developer or small startup: Start with platforms like Cloudflare Pages + Workers — the free tier is genuinely generous, the DX has improved dramatically, and you can scale into a more complex architecture as revenue justifies it.
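The hybrid option in the list above amounts to a routing decision per request. As a rough sketch, assuming hypothetical path conventions (static files identified by extension, `/auth/` and `/api/personalize` handled by edge middleware), the split might look like this:

```typescript
// Request routing for the hybrid approach: static assets from the CDN, auth and
// personalization in edge middleware, heavy work at a central origin.
// Path prefixes and extensions are hypothetical; adapt them to your URL layout.
type Tier = "cdn" | "edge" | "origin";

const STATIC_EXTENSIONS = [".js", ".css", ".png", ".jpg", ".svg", ".woff2"];

function routeRequest(pathname: string): Tier {
  if (STATIC_EXTENSIONS.some((ext) => pathname.endsWith(ext))) return "cdn";
  // Cheap, latency-sensitive checks that need no central database round trip.
  if (pathname.startsWith("/auth/") || pathname.startsWith("/api/personalize")) return "edge";
  // Everything else (e.g. media processing, reports, writes) goes to the origin.
  return "origin";
}
```

In a real deployment these tiers are usually configured in your platform (for example, Next.js middleware matchers or Workers routes) rather than in one function; the point is that each request class gets an explicit home.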

The bottom line is this: edge-based full-stack architecture in 2026 isn’t a future concept. It’s a present-tense engineering decision with real trade-offs, real benefits, and a rapidly maturing ecosystem. The teams winning today aren’t necessarily those with the most sophisticated edge setup; they’re the ones who thoughtfully matched their architecture to their actual user distribution and performance requirements.

Editor’s Comment: The most exciting thing about the edge computing conversation in 2026 isn’t the technology itself; it’s how it’s forcing developers to think more carefully about where computation happens and why. After years of “just throw it in the cloud,” we’re finally asking smarter architectural questions. If you’re starting a new full-stack project this year, I genuinely recommend spending an afternoon prototyping on Cloudflare Workers before you default to a traditional server setup. You might be surprised how much of your backend logic runs beautifully with cold starts around 5 ms, globally distributed, at a fraction of the cost. And if it doesn’t fit, you’ll know exactly why, and that’s equally valuable knowledge.
