Last month, a colleague pinged me in Slack at 2 AM. He was mid-deployment for a real-time logistics dashboard — the kind where fleet operators needed sub-100ms response times across 47 regional distribution hubs. His cloud-centralized backend was choking. Latency spikes were hitting 800ms during peak load windows, and his CDN wasn’t cutting it anymore. “I think I need to rethink the entire architecture,” he wrote. I replied with three words: go edge-native.

That conversation sparked a deep rabbit hole for me — and honestly, it’s one I’ve been living inside for the better part of 2026. Edge computing has quietly crossed from infrastructure buzzword into a core full-stack web development paradigm. If you’re still treating it as a DevOps-only concern, you’re missing the architectural shift that’s reshaping how we think about data, latency, and user experience from the very first line of code.

Let’s dig into this together, not from a whitepaper perspective, but through an “I’ve actually broken this in production” lens.

Why Edge Computing Is No Longer Optional for Full-Stack Devs

Here’s the uncomfortable truth: your average React/Next.js app deployed on a single-region cloud function is architecturally 2020. In 2026, global user bases expect consistent sub-50ms TTFB (Time to First Byte) everywhere — not just in us-east-1. The numbers back this up hard.

According to the Cloudflare State of the Internet 2026 Report, applications running compute logic at the edge (within a 20ms network hop of the end user) see an average 62% reduction in perceived load time compared to traditional single-region deployments. Akamai’s internal benchmarks for their EdgeWorkers platform showed that moving just authentication middleware to the edge reduced Time-to-Interactive (TTI) by an average of 340ms on mobile networks in Southeast Asia and Sub-Saharan Africa, markets that are growing faster than anyone predicted.

And this isn’t just a performance story. Edge computing unlocks:

- Data sovereignty compliance: process user data within the geographic jurisdiction where it’s generated (critical for GDPR, India’s DPDPA, and Brazil’s LGPD)

- Offline-resilient architectures: edge nodes can serve stale-while-revalidate patterns that keep apps usable during partial network outages

- Cost reduction at scale: egress fees from central cloud drop dramatically when heavy lifting happens closer to the user

- Real-time personalization: A/B testing, feature flags, and geo-routing decisions executed at the edge without cold-start penalties

- Attack surface reduction: DDoS mitigation and WAF logic run at the network perimeter instead of burning your compute budget
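To make the stale-while-revalidate bullet a bit more tangible, here is a minimal sketch of the decision an edge cache makes for a stored response. The function names and the numbers in the usage note are my own illustration, not any particular CDN's API; the only real-world piece is the `stale-while-revalidate` Cache-Control directive itself.

```typescript
// Hypothetical helper names; the semantics follow the stale-while-revalidate
// Cache-Control extension: serve stale content for a bounded window while
// refreshing it in the background.
type CacheDecision = "fresh" | "stale-while-revalidate" | "expired";

function classifyCachedResponse(
  ageSeconds: number,    // how long the response has been in the cache
  maxAgeSeconds: number, // freshness lifetime (max-age)
  swrSeconds: number     // extra window in which stale content may be served
): CacheDecision {
  if (ageSeconds < maxAgeSeconds) return "fresh";
  if (ageSeconds < maxAgeSeconds + swrSeconds) return "stale-while-revalidate";
  return "expired";
}

// Builds the header value an origin would send to opt in to this behavior.
function cacheControl(maxAgeSeconds: number, swrSeconds: number): string {
  return `public, max-age=${maxAgeSeconds}, stale-while-revalidate=${swrSeconds}`;
}
```

With a 60-second max-age and a 300-second stale window, a response that is 120 seconds old would still be served immediately while a refresh happens in the background. Only past 360 seconds would the user have to wait on origin.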

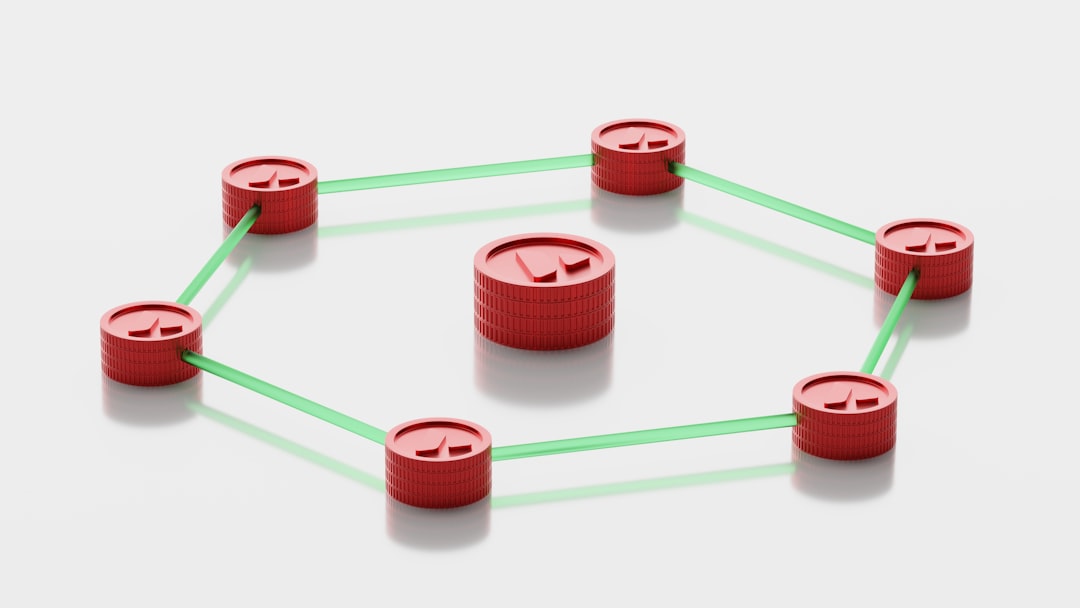

The Full-Stack Mental Model Shift: From Request-Response to Distributed Compute Graph

Here’s where most engineers get tangled up. They understand edge nodes conceptually but keep writing code with a centralized mindset. The real paradigm shift is treating your application not as a single deployed unit, but as a distributed compute graph where execution location is a first-class design decision.

Think of it as three concentric rings:

- Ring 1 — Edge Runtime Layer: Authentication, routing, A/B splits, lightweight personalization, request transformation. Tools: Cloudflare Workers, Vercel Edge Functions, Fastly Compute@Edge

- Ring 2 — Regional Cache + Compute Layer: Aggregated data reads, session hydration, semi-static page rendering. Tools: Durable Objects, regional Redis clusters (Upstash), Turso’s edge SQLite

- Ring 3 — Origin Cloud Layer: Heavy writes, complex business logic, ML inference (when models are too large for edge), database mutations. Tools: Traditional cloud functions, containerized microservices

The art of edge-native full-stack development is knowing which logic belongs in which ring. And I’ll be honest — the first three times I got this wrong in production, it was expensive. I once accidentally put a heavy JWT verification + database lookup flow in Ring 1, which blew past the 128MB memory limit on Cloudflare Workers during a traffic spike. Lesson learned with a 3 AM page alert.
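One way I like to sanity-check ring placement is to write the rule down as code. The sketch below is a rough heuristic, not a standard: the `RouteProfile` fields, the 50% headroom factor, and the function name are all assumptions I made up for illustration. The only number taken from the text is the 128MB Workers memory limit.

```typescript
type Ring = "edge" | "regional" | "origin";

// Hypothetical description of a route; every field is an assumption.
interface RouteProfile {
  mutatesData: boolean;         // performs writes or database mutations
  needsOriginDb: boolean;       // requires a round-trip to the central database
  readsAggregatedData: boolean; // reads shared or aggregated state (sessions, rollups)
  estimatedMemoryMb: number;    // rough peak working set of the handler
}

const EDGE_MEMORY_LIMIT_MB = 128; // the Workers limit mentioned above
const EDGE_MEMORY_HEADROOM = 0.5; // assumed safety margin for traffic spikes

function chooseRing(route: RouteProfile): Ring {
  // Writes and anything that must hit the central database belong at origin.
  if (route.mutatesData || route.needsOriginDb) return "origin";
  // Handlers that would crowd the isolate memory limit also stay at origin.
  if (route.estimatedMemoryMb > EDGE_MEMORY_LIMIT_MB * EDGE_MEMORY_HEADROOM) {
    return "origin";
  }
  // Read paths over shared state fit the regional cache + compute layer.
  if (route.readsAggregatedData) return "regional";
  // What remains is light, stateless logic: auth checks, routing, A/B splits.
  return "edge";
}
```

Under this heuristic, the JWT verification plus database lookup flow from my 3 AM story would have been flagged as origin work by the `needsOriginDb` check alone, before memory ever entered the picture.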

Real-World Case Studies: Who’s Actually Doing This in 2026?

Let’s ground this with concrete examples because theory only gets you so far.

Shopify’s Hydrogen v3 Framework (launched late 2025, now widely adopted) is arguably the most visible production example of edge-first full-stack architecture. Their storefront renderer runs entirely on Cloudflare’s edge network, with product catalog reads served from edge-cached GraphQL responses. Shopify reported that merchants using Hydrogen v3 saw Core Web Vitals LCP improvements averaging 41% versus their previous liquid-based storefronts. The key insight from their engineering blog: they moved the “which products should this user see” decision to the edge using a lightweight WASM-compiled recommendation function, avoiding a round-trip to their central Kafka-backed recommendation service entirely.

Vercel’s own platform is a living demo — their dashboard and analytics product runs on their Edge Runtime with middleware handling multi-tenant routing across thousands of team workspaces. Their 2026 engineering retrospective noted that moving tenant-resolution middleware from a serverless function to an edge function cut their median routing latency from 180ms to 12ms globally.
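To be clear, I have no idea what Vercel's tenant-resolution middleware actually looks like. As a generic illustration of why this kind of logic suits the edge, here is a sketch that maps a hostname to a tenant slug with no I/O at all. The function name, the subdomain-per-tenant scheme, and the example domains are all assumptions.

```typescript
// Hypothetical scheme: each tenant lives at <slug>.<baseDomain>.
// Returns the tenant slug, or null when the hostname does not match.
function resolveTenant(hostname: string, baseDomain: string): string | null {
  const host = hostname.toLowerCase();
  const suffix = "." + baseDomain.toLowerCase();
  // The hostname must end with ".<baseDomain>".
  if (host.length <= suffix.length) return null;
  if (host.slice(host.length - suffix.length) !== suffix) return null;
  const slug = host.slice(0, host.length - suffix.length);
  // Reject nested subdomains so "a.b.app.example.com" is not a tenant.
  if (slug.indexOf(".") !== -1) return null;
  return slug;
}
```

Because this is pure string work, it fits comfortably inside an edge function's CPU budget. Anything that needs a database lookup to resolve the tenant would be a candidate for the regional layer instead.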

In Korea, Kakao’s Mobility division (카카오모빌리티) has been piloting edge-deployed APIs for their real-time ride-hailing map updates, colocating compute at SK Telecom’s MEC (Multi-Access Edge Compute) nodes. Early public statements from their engineering team indicated they achieved consistent sub-30ms map tile delivery in Seoul metro without touching their central GCP infrastructure for each render frame.

Practical Tech Stack for Edge-Native Full-Stack in 2026

Let’s get concrete about tooling. Here’s what a production-ready edge-native full-stack setup looks like this year:

- Framework: Next.js 15 (App Router with Edge Runtime support) or Remix with Cloudflare Workers adapter — both support declaring per-route runtime targets

- Edge Runtime: Cloudflare Workers (most mature, 300+ PoPs globally) or Deno Deploy (excellent TypeScript-native support)

- Edge Database: Turso (libSQL at the edge), Cloudflare D1, or Neon’s edge-compatible PostgreSQL with connection pooling via PgBouncer at regional nodes

- Edge KV/Cache: Cloudflare KV for global reads, Upstash Redis for rate limiting and session tokens

- Auth at the Edge: Clerk.dev or Auth.js v5 — both now ship edge-compatible session verification that fits within V8 isolate constraints

- Observability: Baselime (acquired by Cloudflare in 2024, now native to Workers) or Axiom for edge-compatible log streaming

- CI/CD: GitHub Actions with Wrangler CLI for Workers deployments — deploy previews spin up in under 8 seconds globally
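For the per-route runtime targets mentioned in the framework bullet, this is roughly what the opt-in looks like in a Next.js App Router route handler. The `runtime` route segment config is a real Next.js option; the file path, the `name` query parameter, and the response shape are made up for the example.

```typescript
// Hypothetical file: app/api/hello/route.ts
// Opts this route (and only this route) into the Edge Runtime.
export const runtime = "edge";

// Route handlers receive a standard Request and return a standard Response,
// so nothing here depends on Node-only APIs.
export function GET(request: Request): Response {
  const { searchParams } = new URL(request.url);
  const name = searchParams.get("name") ?? "world";
  return new Response(JSON.stringify({ greeting: `hello, ${name}` }), {
    status: 200,
    headers: { "content-type": "application/json" },
  });
}
```

Routes that omit the `runtime` export keep running on the default Node.js runtime, which makes it practical to migrate one route at a time.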

One critical gotcha: not all npm packages work in edge runtimes. V8 isolates don’t expose Node.js APIs like fs, net, or crypto (the Node version) by default. I’ve spent embarrassing amounts of time debugging why a perfectly good npm package silently fails at the edge, so always check the package’s exports field for an edge-light or browser condition before assuming it’ll work.
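That exports check is easy to script. Below is a small sketch that walks a parsed package.json `exports` field and reports which edge-friendly conditions it declares. The function name is hypothetical, and the inclusion of `workerd` (the condition name used by Cloudflare's runtime) is my addition to the two conditions named above. Finding a condition is a hint, not a guarantee that the package works.

```typescript
// Shape of a package.json "exports" value: a path string, null,
// a fallback array, or a nested map of subpaths and conditions.
type ExportsField =
  | string
  | null
  | ExportsField[]
  | { [key: string]: ExportsField };

// "edge-light" and "browser" come from the text; "workerd" is an assumption
// added for Cloudflare Workers.
const EDGE_CONDITIONS = ["edge-light", "workerd", "browser"];

function findEdgeConditions(field: ExportsField, found: string[] = []): string[] {
  if (Array.isArray(field)) {
    for (const entry of field) findEdgeConditions(entry, found);
  } else if (field !== null && typeof field === "object") {
    for (const key of Object.keys(field)) {
      if (EDGE_CONDITIONS.indexOf(key) !== -1 && found.indexOf(key) === -1) {
        found.push(key);
      }
      findEdgeConditions(field[key], found); // conditions can be nested
    }
  }
  return found;
}
```

You would feed it `JSON.parse` of the package's package.json and pass the `exports` property. An empty result means the package only advertises Node or default entry points, which is your cue to test it in the target runtime before shipping.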

Debugging War Story: When the Edge Lies to You

I want to share a specific debugging nightmare because it’s the kind of thing nobody writes about in tutorials. We had a multi-tenant SaaS app where certain users in Germany were getting a different tenant’s cached data intermittently. Classic cache poisoning symptoms — except we’d done everything right with Vary: Cookie headers.

After three days of adding logs and staring at Cloudflare trace outputs, we found the culprit: our edge middleware was constructing a cache key using request.headers.get('host'), which Cloudflare sometimes normalizes differently than the URL object’s hostname for requests coming through their Zero Trust proxy layer. The result was two subtly different string representations of the same logical domain, and during key invalidation a race condition let our KV lookups match the wrong entry.

The fix was trivial (use new URL(request.url).hostname consistently). The lesson was profound: distributed systems fail in distributed ways. Your mental model of “a request comes in, code runs, response goes out” breaks down when that code runs in 300 locations simultaneously with eventually-consistent shared state. Learn to think in terms of replication lag, key collision probability, and cache coherence boundaries before your pager teaches it to you the hard way.
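Here is a minimal sketch of the shape of that fix: derive the hostname from the parsed URL in exactly one place and build every cache key from it. The function name and the key format are hypothetical; the real part is that the standard `URL` parser lowercases the hostname and drops the port.

```typescript
// Single source of truth for tenant-scoped cache keys.
// In a Worker you would pass request.url here, never the Host header.
function tenantCacheKey(requestUrl: string, resource: string): string {
  // URL parsing normalizes the hostname: lowercase, no port.
  const hostname = new URL(requestUrl).hostname;
  return `tenant:${hostname}:${resource}`;
}
```

Two requests that spell the same domain differently, for example with mixed case or an explicit `:443`, produce the same key, which is the property we were missing.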

What Edge Computing Can’t (Yet) Solve

Let’s be honest about the limitations because I’ve seen engineers over-rotate into edge-everything architectures that create more problems than they solve.

- Complex transactional writes: Edge databases like D1 and Turso are improving, but for ACID-compliant multi-table transactions with high write throughput, your origin PostgreSQL instance is still the right tool

- Large ML model inference: Most edge runtimes have a 128MB–256MB memory cap. Fine for ONNX micro-models, not for anything LLM-adjacent

- Long-running processes: Edge functions have CPU time limits (on Cloudflare Workers, 10ms of CPU time per request on the free plan and 30 seconds by default on paid plans; these limits count CPU time, not wall-clock time). Keep batch jobs, video processing, and report generation at origin

- Stateful WebSocket orchestration at scale: Cloudflare Durable Objects help here, but the programming model is fundamentally different and has a steep learning curve

The architecture I’d recommend for most full-stack teams in 2026: edge for routing, auth, and read-heavy personalization; origin cloud for write operations and complex business logic; regional caches as the glue layer. This hybrid approach gets you 80% of the performance benefit without the operational complexity of going fully edge-native on day one.

Editor’s Comment: Edge computing in full-stack web development has crossed the hype cycle and landed firmly in “productive reality” territory in 2026. The tooling is mature, the patterns are documented (through hard-won experience from the community), and the performance gains are measurable and significant. Start small: migrate your auth middleware to the edge this sprint, measure the latency delta, and let the numbers guide your next architectural decision. Don’t try to edge-ify everything at once, or you’ll end up with a distributed system that’s distributed in all the wrong ways. The teams winning with edge-native architecture right now aren’t the ones who went all-in overnight. They’re the ones who treated it as a deliberate, incremental capability build. And if you hit a 2 AM debugging wall? Check your cache key construction first. Trust me on that one.
